How to Format the Google Disavow List

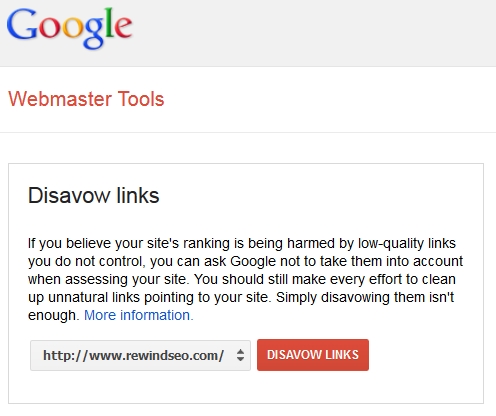

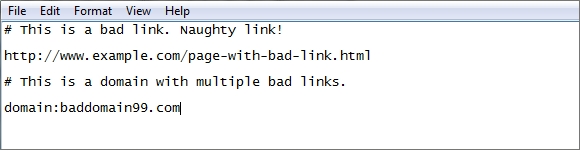

If you wish to create a disavow list or modify your existing one, you must understand how it is formatted so that you don't break anything. Fortunately, the format is very simple. The disavow list can contain a combination of comments, individual URLs, and entire domains to be disavowed. It will look something like this:

The disavow can contain individual URL page entries or entire domains.

Disavow Format

Each entry needs to be on a new line. There are 3 types of entries:

- Start a line with “#” to add a comment. Comments are for your own reference or notes, or for documentation when the disavow is part of a reconsideration request (manual penalty). You can add as many comment lines as you need; each line must start with #.

- Use a full URL on a new line to disavow links from that specific page.

- Use “domain:” followed by a root domain or subdomain to disavow every link from that domain. This is for cases where you are certain you don’t want any links from that domain.
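The three entry types above can be checked with a short script before the file is uploaded. The following is a minimal sketch in Python, not Google's own validation: the function name `classify_entry` and the sample domains are hypothetical, and the checks are only a rough local sanity test of the rules described in this article.

```python
from urllib.parse import urlparse


def classify_entry(line: str) -> str:
    """Return 'blank', 'comment', 'domain', 'url', or 'invalid' for one line."""
    entry = line.strip()
    if not entry:
        return "blank"
    if entry.startswith("#"):
        return "comment"
    if entry.startswith("domain:"):
        host = entry[len("domain:"):]
        # A domain entry is a bare host name: no scheme, no path, no spaces.
        if host and "/" not in host and " " not in host and "." in host:
            return "domain"
        return "invalid"
    # Anything else should be a full URL including its scheme.
    parsed = urlparse(entry)
    if parsed.scheme in ("http", "https") and parsed.netloc:
        return "url"
    return "invalid"


# Hypothetical sample file contents; the domains are placeholders.
sample = """\
# Contacted site owner, no reply
http://spam.example.com/links/page1.html
domain:bad-example.com
domain:http://bad-example.com
"""

results = [classify_entry(line) for line in sample.splitlines()]
print(results)
```

The last sample line is flagged as invalid because a domain entry must not include “http://”.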

Notes for Domain Disavow (domain:BadDomain.com)

‣ Disavowing a root domain WILL disavow all subdomains. E.g. “domain:blogspot.com” will disavow blog123.blogspot.com and blog456.blogspot.com. This is a detail we previously had wrong (along with much of the rest of the internet), but it has been confirmed by John Mueller from Google. On the other hand, disavowing a specific subdomain, like “domain:blog123.blogspot.com”, will NOT disavow the root domain (blogspot.com) or any other subdomains.

‣ Domain entries must NOT include “http://” or “www.” An entry must simply be domain:domain-name.com or, for a subdomain, domain:sub-domain.top-domain.com.

‣ Disavowing bad links by domain is the method we recommend for almost all cases. It ensures that other bad links from that domain you may not be aware of are disavowed too, along with all future links from it and links on dynamic pages (with changing names), such as those found on many forum profile lists and SEO link directories.
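Since domain-level entries are the recommended approach, a list of bad link URLs can be collapsed into “domain:” entries with a few lines of code. This is a minimal sketch in Python under stated assumptions: the helper names `to_domain_entry` and `build_domain_entries` and the example URLs are hypothetical, and stripping “www.” simply follows the formatting note above.

```python
from urllib.parse import urlparse


def to_domain_entry(url: str) -> str:
    """Turn a full URL into a 'domain:' entry without scheme or 'www.'."""
    # hostname is lowercased by urlparse; it is None if the URL has no scheme.
    host = urlparse(url).hostname or ""
    if host.startswith("www."):
        host = host[len("www."):]
    return f"domain:{host}"


def build_domain_entries(urls):
    """Return unique 'domain:' entries, keeping first-seen order."""
    entries = []
    for url in urls:
        entry = to_domain_entry(url)
        # Skip URLs with no recognizable host and skip duplicates.
        if entry != "domain:" and entry not in entries:
            entries.append(entry)
    return entries


# Hypothetical bad-link URLs; the domains are placeholders.
bad_links = [
    "http://www.bad-example.com/profile?id=1",
    "https://bad-example.com/profile?id=2",
    "http://blog123.spam-example.net/post.html",
]

entries = build_domain_entries(bad_links)
print("\n".join(entries))
```

Note that a subdomain URL produces a subdomain entry here; per the note above, that does not disavow the root domain, so list the root domain instead if that is what you intend.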

If you need to submit the list, visit this quick Guide to Submitting the Disavow List.

About Daniel Delos

Daniel is the founder of Rewind SEO and has worked on hundreds of Google penalty analyses and recovery projects, covering both manual and algorithmic penalties. He has almost two decades of total SEO experience and has worked almost exclusively on risk auditing and penalty prevention/recovery since 2014.
